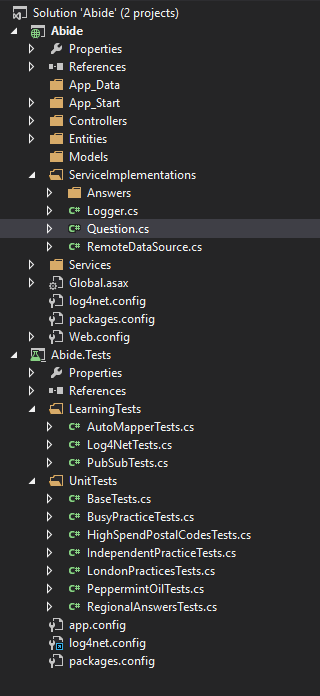

Intro and Pub/Sub

This post is going to be a little different. This time I'm going to show off a coding sample that I did for a company a while back. The full code sample can be found on my GitHub.

The challenge was to create a solution that could process a remote file and use the data to answer a series of questions. Some of the things that I had to keep in mind were resource utilization, testing, ease of maintenance, and extensibility: it had to be easy to add additional questions.

Before I started I downloaded the files to get a feel for the data. I was told that the data was health care related, but I was not told what the columns within the data meant. That was part of the challenge, so I had to do some additional research and infer as best I could. I found that one of the files was a CSV containing some information about medical practices within the UK. The second file, also a CSV, held the prescriptions associated with each practice in a certain month. After some additional research I was able to find a mapping from the columns in the second file to what they meant in the real world. Armed with this information I was ready to start coding.

As I was thinking about the structure of my code and what steps I would need to take, I determined that there were two operations that I could not avoid. The first was that I had to process each file line by line in order to parse the data into something I could use in my code. The second was that I had to rerun the calculations for each question after each record was parsed. I could not just stop processing or skip certain objects, since that could impact my calculations.

Keeping the requirement of resource utilization in mind, one thing that I did not want to do was walk through each file line by line, parse that line, add that object to an in-memory collection, and then, once I was done with the file, walk the in-memory collection to do the calculations.
I felt that would be bad for two reasons: 1) I would be holding all of the data in memory, and 2) it would add many more operations (in addition to the two already identified), as I would have to walk the entire practice collection and then walk the associated prescriptions for each practice. With that in mind I decided that I wanted a way to do the calculations as soon as each record was done processing.

My first thought was to use an event-based model. I would raise an event as soon as each line was parsed. That would give me the benefit of redoing my calculations with each new object. The thing I didn't like was that I felt the event model would cause very tight coupling between the file that handled the data source and the file(s) that did the calculations. So I went looking for another answer.

Finally, after some more thought and internet searching, I landed on the Pub/Sub model. I chose the Pub/Sub model because it gave me the real-time calculations I wanted without coupling my data source files to my calculation files. I added the Pub/Sub NuGet package to my solution (version 1.4.1 at the time) and was off to the races.

Pub/Sub was super easy to implement. The package is a set of extension methods off of the base Object class, so all of my entities had the publish and subscribe methods readily available to them. As you can see, it was very simple to publish that an entity (a practice in this example) was done being parsed.

```csharp
private void ParsePracticeFromStreamData(Stream downloadStream)
{
    try
    {
        using (var stream = new StreamReader(downloadStream))
        {
            string line = String.Empty;
            while (!stream.EndOfStream)
            {
                // Read one CSV line and map it to a Practice via AutoMapper.
                line = stream.ReadLine();
                var prac = Mapper.Map<Practice>(line);

                // Notify every subscriber as soon as this record is parsed.
                this.Publish<Practice>(prac);
            }
        }
    }
    catch (Exception e)
    {
        _logger.LogException(MethodInfo.GetCurrentMethod().Name, e);
    }
}
```

In this method I'm taking the download stream and reading each line, using AutoMapper to map the CSV format to my entity, and then publishing the new Practice object.
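To make the publish/subscribe flow described above concrete, here is a minimal, self-contained sketch. It is not the PubSub NuGet package and does not reproduce its API: `MiniHub`, `PracticeCounter`, and the `Code` property on `Practice` are hypothetical names made up for this illustration. It only shows the idea that a subscriber can update a running calculation as each record is published, without the publisher knowing who is listening and without storing the records.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for a pub/sub hub. NOT the PubSub NuGet package's API.
// Single-threaded sketch: no locking, no unsubscribe.
public static class MiniHub
{
    // Handlers keyed by the message type they subscribed to.
    private static readonly Dictionary<Type, List<Action<object>>> Handlers =
        new Dictionary<Type, List<Action<object>>>();

    public static void Subscribe<T>(Action<T> handler)
    {
        if (!Handlers.TryGetValue(typeof(T), out var list))
        {
            list = new List<Action<object>>();
            Handlers[typeof(T)] = list;
        }
        list.Add(message => handler((T)message));
    }

    public static void Publish<T>(T message)
    {
        // With no subscribers for T, the message is simply dropped.
        if (Handlers.TryGetValue(typeof(T), out var list))
        {
            foreach (var handler in list)
            {
                handler(message);
            }
        }
    }
}

// Assumed minimal entity; the real Practice class has more columns.
public class Practice
{
    public string Code { get; set; }
}

// Hypothetical calculation class: keeps a running count, never a list of practices.
public class PracticeCounter
{
    public int Count { get; private set; }

    public PracticeCounter()
    {
        MiniHub.Subscribe<Practice>(practice => Count++);
    }
}

public static class PubSubDemo
{
    public static void Main()
    {
        var counter = new PracticeCounter();
        foreach (var code in new[] { "A001", "A002", "A003" })
        {
            MiniHub.Publish(new Practice { Code = code });
        }
        Console.WriteLine(counter.Count); // prints 3
    }
}
```

The parser only ever calls `Publish`, so adding another calculation means adding another subscriber class, not touching the parsing code.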
Since the publish sends out the practice object, anybody that is subscribed to the practice will receive the practice object and can do whatever calculations they need. Subscribing to an event is just as easy. If one of the calculation files needed to handle practice data, then in the constructor I would just subscribe like this.

```csharp
public BusyPractice()
```

If a file did not need to do anything with the practice data it would not subscribe, and then it would not be notified. That's my Pub/Sub code in a nutshell.

Using IoC to answer the questions

In pretty much every application that I write I use Ninject as my dependency container, and I use Dependency Injection as my preferred method of IoC. In this application I had a simple Ninject binding that took all the classes in the assembly (only one for this project) and bound them to their default interfaces, like this.

```csharp
private static void RegisterServices(IKernel kernel)
```

While there is nothing really special about that code, what is special is how it helped me answer questions and allow for extensibility. Next, I created a very simple IAnswer interface with only one method on it, GetAnswerDictionary.

IAnswer Interface

```csharp
public interface IAnswer
```

As with my Ninject bindings, there isn't anything special about that interface. What I did was, inside my class that compiled all of the answers (the Question class), I used Ninject to get all of the classes that implemented IAnswer. Then I looped over each class, called GetAnswerDictionary, and added the result to the Question class's dictionary.

```csharp
private List<IAnswer> _answers;
```

I felt that this gave me the extensibility that I wanted, because now if somebody needed to answer a different question with the data, all they needed to do was create a new class that implemented IAnswer, subscribe to the relevant publish (either practice or prescription), and then do the required calculations.
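The answer-collection loop described above can be sketched roughly as follows. Several things here are assumptions rather than the actual code: the post names `GetAnswerDictionary` but not its return type, so `Dictionary<string, string>` is a guess; the two answer classes, their keys, and their values are made-up placeholders; and the implementations are passed in by hand where the real application has Ninject supply them.

```csharp
using System;
using System.Collections.Generic;

// Assumed shape: the post names the method but not its return type.
public interface IAnswer
{
    Dictionary<string, string> GetAnswerDictionary();
}

// Hypothetical answers with hard-coded placeholder values.
public class PracticeCountAnswer : IAnswer
{
    public Dictionary<string, string> GetAnswerDictionary()
    {
        return new Dictionary<string, string> { { "PracticeCount", "3" } };
    }
}

public class PrescriptionCountAnswer : IAnswer
{
    public Dictionary<string, string> GetAnswerDictionary()
    {
        return new Dictionary<string, string> { { "PrescriptionCount", "12" } };
    }
}

public class Question
{
    private readonly List<IAnswer> _answers;

    // In the real application the container would provide these instances.
    public Question(IEnumerable<IAnswer> answers)
    {
        _answers = new List<IAnswer>(answers);
    }

    public Dictionary<string, string> GetAllAnswers()
    {
        var combined = new Dictionary<string, string>();
        foreach (var answer in _answers)
        {
            foreach (var pair in answer.GetAnswerDictionary())
            {
                // On duplicate keys the later answer overwrites the earlier one.
                combined[pair.Key] = pair.Value;
            }
        }
        return combined;
    }
}

public static class AnswerDemo
{
    public static void Main()
    {
        var question = new Question(new IAnswer[]
        {
            new PracticeCountAnswer(),
            new PrescriptionCountAnswer()
        });

        foreach (var pair in question.GetAllAnswers())
        {
            Console.WriteLine(pair.Key + ": " + pair.Value);
        }
    }
}
```

The `Question` class never names a concrete answer type, which is what lets a new question be added without editing it.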
Ninject would bind the new class to IAnswer, and the Question class would take care of calling that new IAnswer and passing the answers back to the user.

By now we've seen how I dealt with resource utilization and extensibility. So now let's talk testing.

Testing

I'm a big believer in and proponent of automated testing. I don't always follow TDD and write my unit tests first; sometimes I do, but not always. Since this was just a coding sample, I didn't add integration tests, API tests, or as many unit tests as I would in a production application. As you can see from my solution explorer, I stuck with two types of tests for this project: learning and unit.

What I call learning tests are tests that I write to learn how a 3rd party library works. I feel that since I would have to learn how these packages work at some point anyway, I might as well write some tests for them. The benefits that I get are 1) I learn the library or package, 2) if I ever have to update the library I have a suite of tests that I can run to make sure nothing broke in the upgrade, and 3) if I ever forget how something works I have a quick and easy reference that doesn't require me to hunt through my entire solution trying to find that one place I did that one thing. Here is a test that I wrote for testing my AutoMapper setup.

```csharp
[Test]
```

Now I have a simple test to make sure that my custom converter works and maps each column in the CSV file to the correct property on the object.

The learning tests that I did for this were not as full as would be required in a production application, but I felt they were enough for this. I have AutoMapper tests to make sure that the custom converters work, Log4Net tests to make sure that my configuration is correct and that Log4Net creates a log file on the file system if one does not already exist, and PubSub tests that make sure I know how to correctly subscribe to a publish and how to publish something. Referenced below is my Log4Net test file.
```csharp
[TestFixture]
```

My unit tests were about what you'd expect. I didn't mock any of the service calls; instead I published items to validate my business logic against the concrete classes. Here is a piece of my BusyPracticeTest file.

```csharp
[TestFixture]
```

The actual frameworks that I used for testing were NUnit and FluentAssertions. I use NUnit for all my C# unit tests, so that's what I chose for this project. I like the way FluentAssertions reads; I find that answer.Should().Be(...) is easier to read and understand than Assert.AreEqual(1, 2). That may just be my opinion, but that's why I went that route.

Improvements I could make

Since this was just a code sample and not a production application, there are things that I didn't do that I would do in production. I would have more tests and different tests, like integration tests and API endpoint tests. I would have a UI, and I would have worked on getting the files to process asynchronously.

For the integration tests I would probably take a slice of the data large enough to be meaningful but small enough that I could verify the numbers by hand, and then store it in a local database. I would then use that data to verify my calculations.

Right now the app is written as a Web API service with no UI. I did consider using AngularJS for my front end, but since the job was a back end job I didn't worry about having a UI.

Processing the files asynchronously would probably be the largest performance improvement I could make. I didn't time it, but I believe processing both CSV files and answering the questions takes somewhere around 15-30 seconds. I started going down the async/await route but I ran into two issues. The first was that an exception was being thrown if the web service endpoint returned while an async thread was still open and running in the background. The second problem was that it was possible, very unlikely but still possible, for a prescription to be parsed before its associated practice.
Most of the time this wouldn't be an issue, but there were some cases where it would matter. Since I didn't have a good solution for either of these, I simply removed all async calls and did it the long way.

Another improvement that I considered was adding SignalR. The benefit I saw from SignalR was that the UI could be updated in real time as each new calculation ran. That would give the user the appearance that something was happening, instead of nothing and then everything. The reason I didn't go down that path was that I've never used that library, and I felt that learning a new library just for this project was too much to take on right now.

Final Thoughts / Summary

So while this isn't perfect code (is there such a thing?) and I could think of plenty of improvements to make, I'm happy with the end result. I felt that Pub/Sub gave me the decoupling and real-time calculations that I wanted. I have a solid start to my automated testing efforts. And my IoC gave me the flexibility to add new questions/answers and swap out the remote data source for a database, a network file location, or any other data source as needed.

If you made it this far on this post, thank you for sticking with me and, as always, Happy Coding.

Sean Wernimont The Blind Squirrel Copyright 2015-2020

Author

Welcome to The Blind Squirrel (because even a blind squirrel occasionally finds a nut). I'm a full-stack web and mobile developer who writes about tips and tricks that I've learned in Swift, C#, Azure, F# and more.